Your marketing team isn't resisting AI. They're out of capacity.


The Revenue Marketer


When every seat is already full, AI adoption fails. Not from resistance, but from overload. Here's what CMOs who are making real progress do differently.

Most AI adoption efforts in marketing don't fail from resistance. They fail because the people expected to lead the change are already working at 150%.

I keep having a version of the same conversation with marketing leaders. A CMO comes back from an AI conference energized. She's not skeptical. She's ready to move. Her first call: 'We need agents.'

Then comes the hard part.

Her team is already maxed out. Campaigns are in flight. The pipeline number hasn't changed. And now AI is supposed to happen on top of all of it.

This is the tension more CMOs are navigating than are willing to admit. And it points to something most AI adoption frameworks get completely wrong.

The biggest barrier to AI success in marketing isn't lack of ideas or lack of tools. It's a lack of capacity to rethink how work gets done while the work keeps coming.

Why AI adoption stalls before it starts

When teams are stretched, they default to what feels actionable: buy tools, run training sessions, brainstorm use cases, build an agent.

None of this is wrong. But it's also how teams stay busy without getting better.

The pattern is consistent across B2B marketing organizations. AI experimentation is happening. Individuals are faster. Teams feel like they're moving. But the organization feels no more decisive, no more aligned, and no closer to results that accumulate over time. The gap between effort and impact keeps widening.

Why? Because AI doesn't correct broken processes. It amplifies whatever is already there, including the dysfunction.

If your workflows are fragmented, siloed, or unclear about who owns what judgment, AI makes those weaknesses run faster. Not better.

Most marketing organizations have approached AI like a productivity initiative. Individuals experiment. A few workflows improve. Nothing fundamentally changes. The constraint is rarely the technology. It's the operating model.

AI fatigue is real. Here's why it makes sense.

Here's what makes this harder than it looks: AI may be making individual tasks faster, and yet work can still feel heavier.

Speed raises expectations. When AI drafts a first pass in seconds, the expectation becomes: produce more, faster, with fewer resources. Time saved in one place gets immediately refilled with more output demands, more review work, more context switching, and more decision fatigue.

Teams can be both more productive and more exhausted at the same time. That's not a paradox. That's what happens when efficiency gains are reinvested into higher output demands without any deliberate change to how work is structured.

For CMOs leading AI adoption, this creates a specific challenge. You're asking already-stretched teams to learn new tools, redesign workflows, and adapt to new expectations while the campaigns still have to go out.

AI adoption fails when it becomes one more thing for overloaded teams to do. It succeeds when leaders redesign work so AI reduces load instead of adding to it.

The trap most leaders fall into

The instinctive response to AI underperformance is more activity: more tools, more training, more pilots.

But training doesn't redesign work. Documentation doesn't enforce standards. Hiring doesn't solve coordination. These moves feel productive because they're visible: they generate Slack threads, status updates, offsite energy. But they don't change how marketing actually operates. And operating change is the only kind that creates compounding benefit.

Here's the thing most marketing leaders won't say out loud: you've been running experiments. Your boss thinks that's the same as making progress. It isn't.

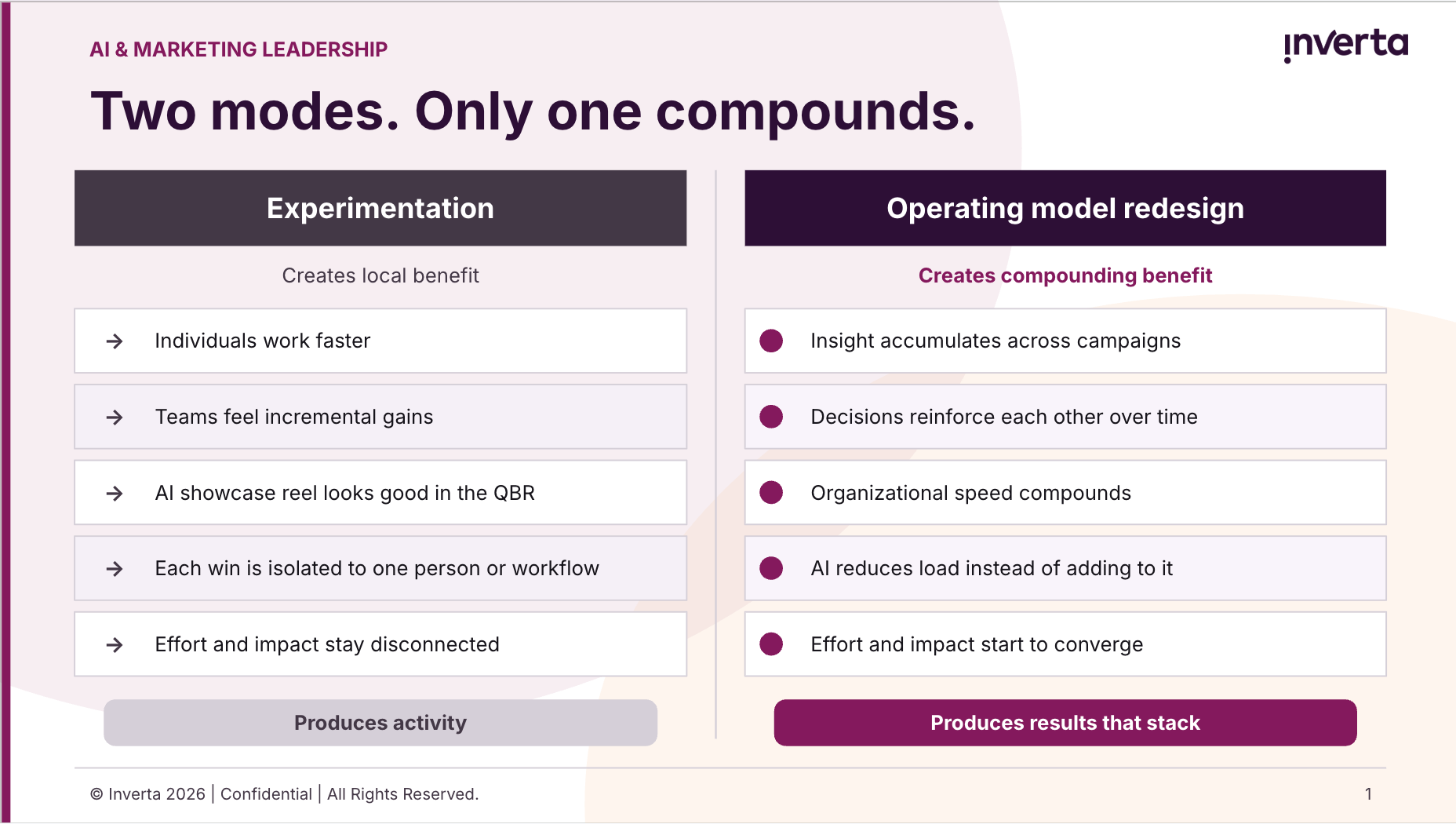

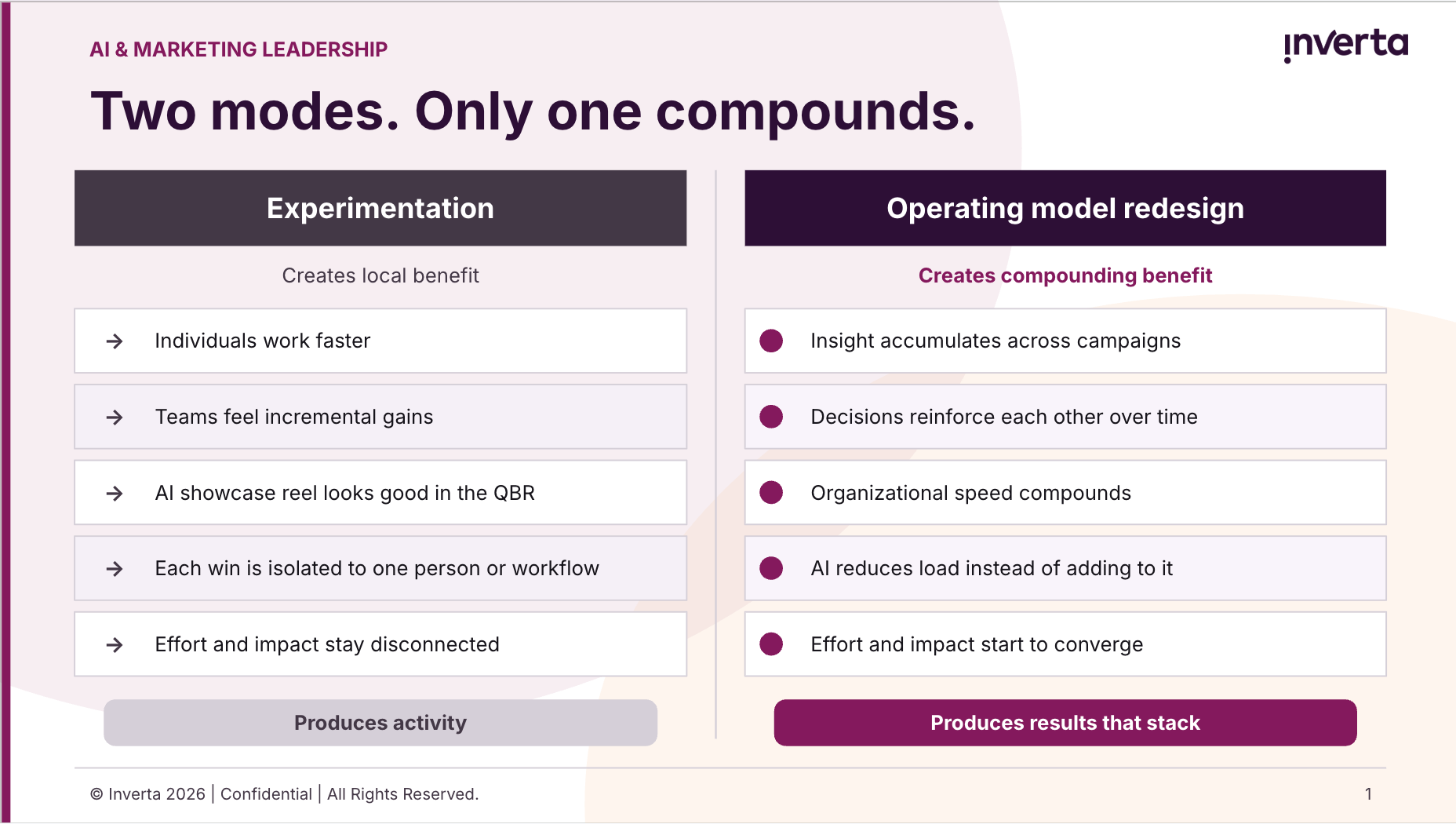

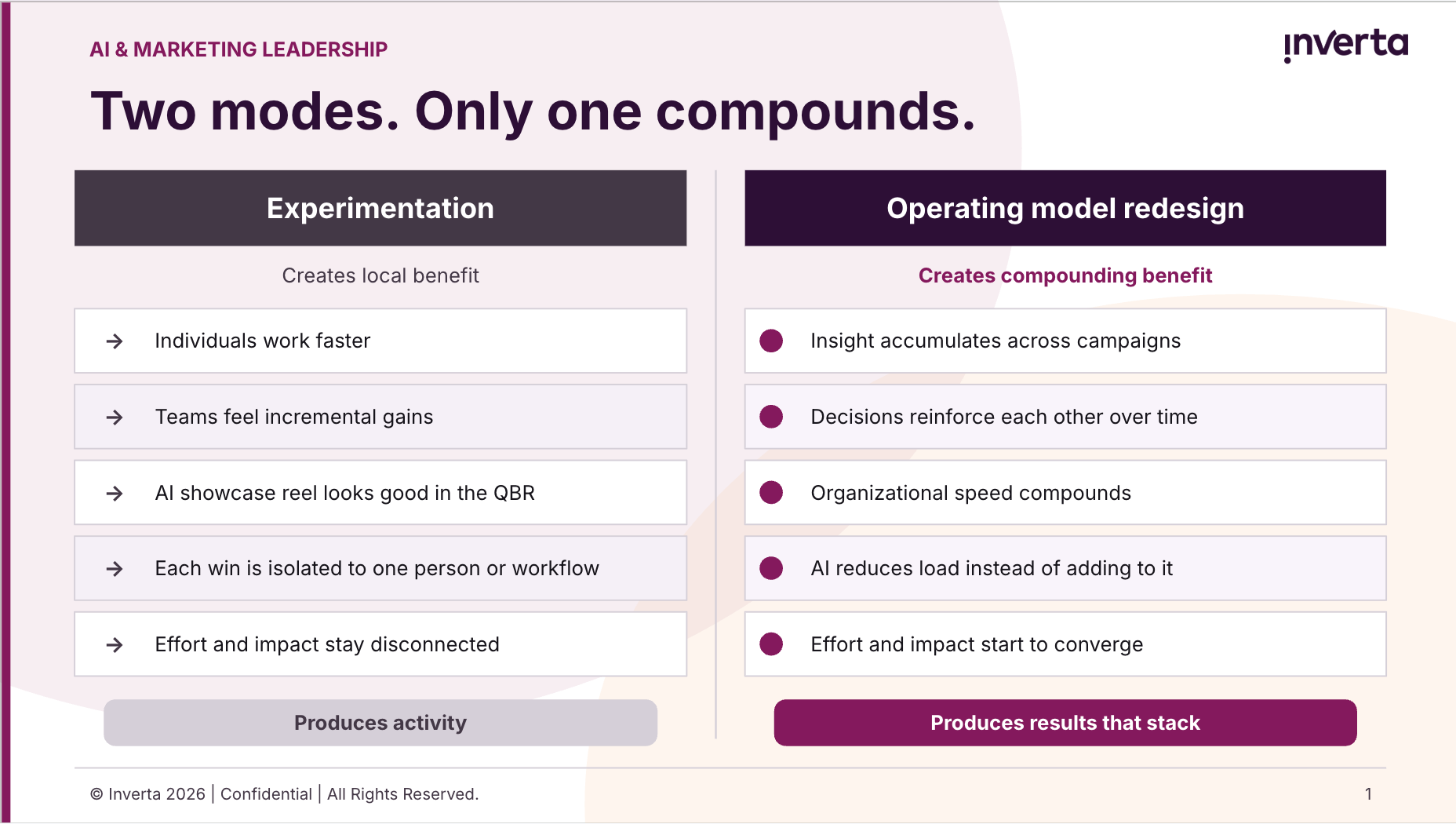

Experimentation creates local benefit: individuals work faster, teams feel incremental gains, and the AI showcase reel looks good in the QBR. Operating change creates compounding benefit: insight accumulates, decisions reinforce each other, and the organization actually gets faster over time. These are not the same thing. One produces activity. The other produces results that stack.

The teams that feel busy without getting better are caught in the first mode while hoping for the second. The move from one to the other isn't a tool decision. It's an operating model decision. Most organizations haven't made it yet.

How to start without a reorg

Here's the good news: you don't need to reorganize to start making real progress. You need one manageable place to begin, and the discipline to finish it before moving to the next thing.

Three moves that create visible, sustainable momentum. Notice that each one has a finish line. That's deliberate. The goal isn't to launch all three at once. It's to complete one before you touch the next.

- Standardize one workflow end to end. Pick something repeated, important, and currently inconsistent: a campaign brief, a demand gen cycle, or a content approval process. Standardize the inputs, the judgment points, and the outputs. This is the prerequisite for AI to actually help. Without it, AI just speeds up the inconsistency.

- Relieve one real burden with an agent. Don't build the most ambitious agent you can imagine. Find the recurring task that consumes the most time relative to its strategic value and hand it off. Make the relief visible to the team. That changes the narrative around AI from 'extra work' to 'actual help.' That shift in team perception is not a nice-to-have. It's the thing that sustains momentum.

- Define 'good' for one critical input before scaling. What does a great brief look like? What makes a qualified signal worth acting on? Where must human judgment stay, and where can it be systematized? Clarity here is what separates teams that scale AI well from teams that simply produce more noise faster.

Notice what's not on this list: a new org structure, a six-month AI roadmap, or a budget request. The sequencing is the point. Each move creates the condition the next one requires. You can't relieve a burden with an agent if the underlying workflow is inconsistent. You can't scale what you haven't defined. One thing, finished, before the next.

One mid-market B2B team we worked with didn't start with a transformation plan. Their demand gen leader was frustrated: leadership kept pushing the team to "use AI more," but no one had removed any work. So a few managers quietly started using it anyway (faster briefs, call summaries, draft nurture sequences) while the same overloaded approval chains ran underneath.

Nothing compounded. It just got busier.

What changed wasn't a new tool or a new hire. Leadership finally named what was actually happening: the team wasn't resisting AI. They were protecting themselves from one more initiative. So they cut a handful of low-value meetings, standardized the campaign brief process, and pointed AI at two or three places where it visibly made people's jobs easier.

Adoption spread on its own after that. Not because of a mandate. Because the team finally had evidence that AI was working for them, not being piled on top of them.

The first job of a marketing AI leader

It isn't generating more ideas. It isn't launching more pilots. It isn't demanding more AI activity from an already maxed-out team.

It's creating enough space for better decisions about work.

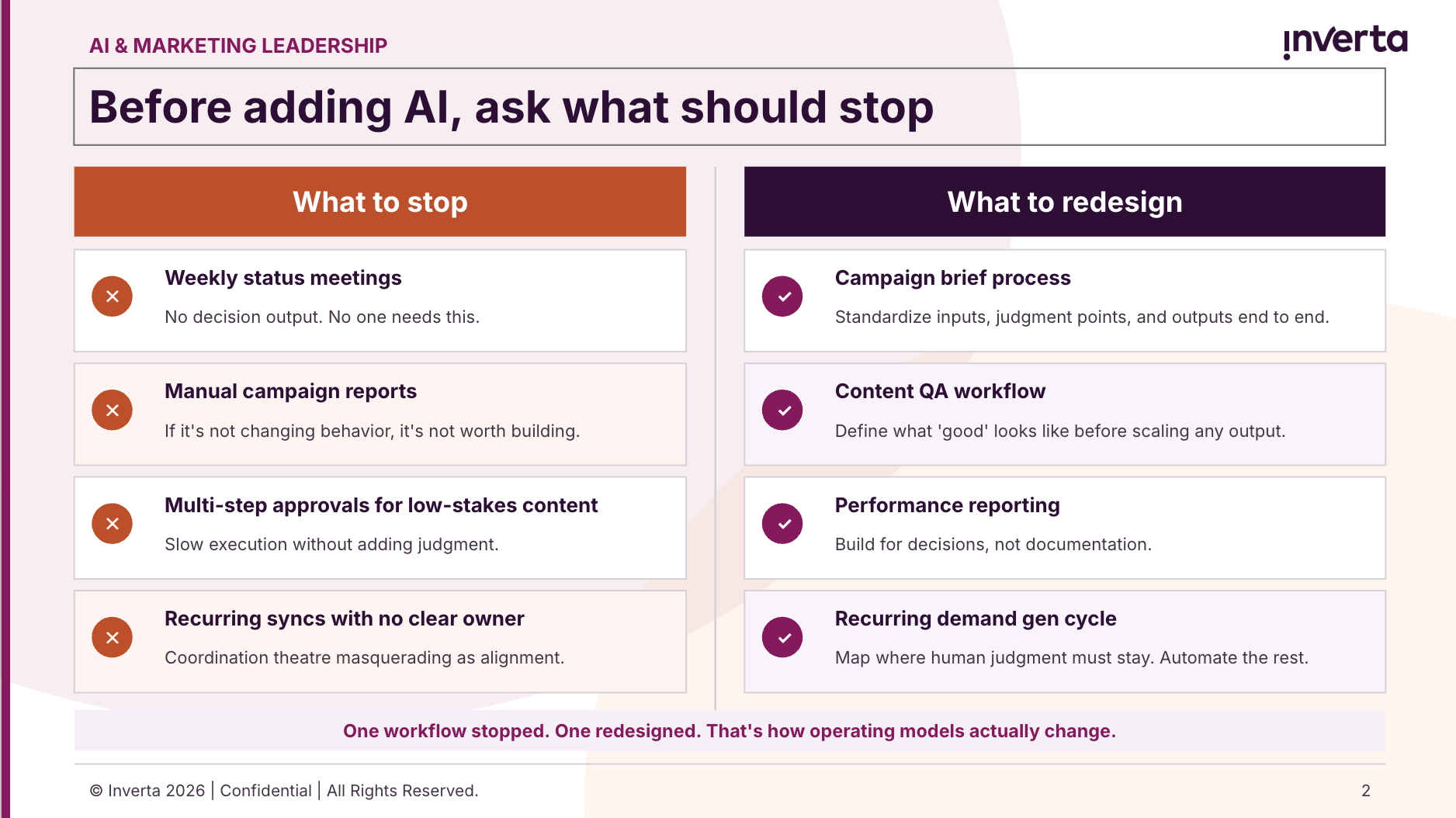

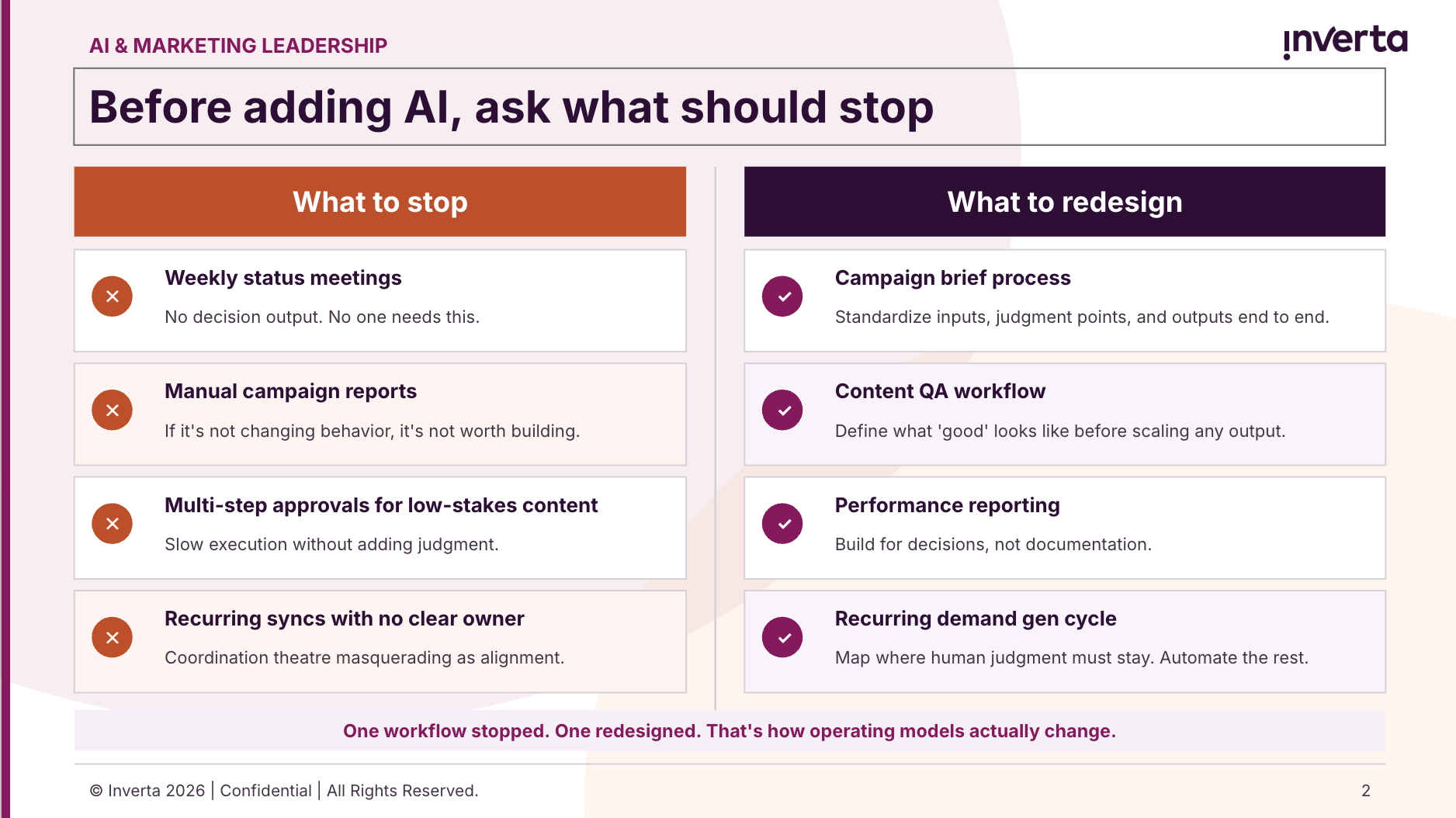

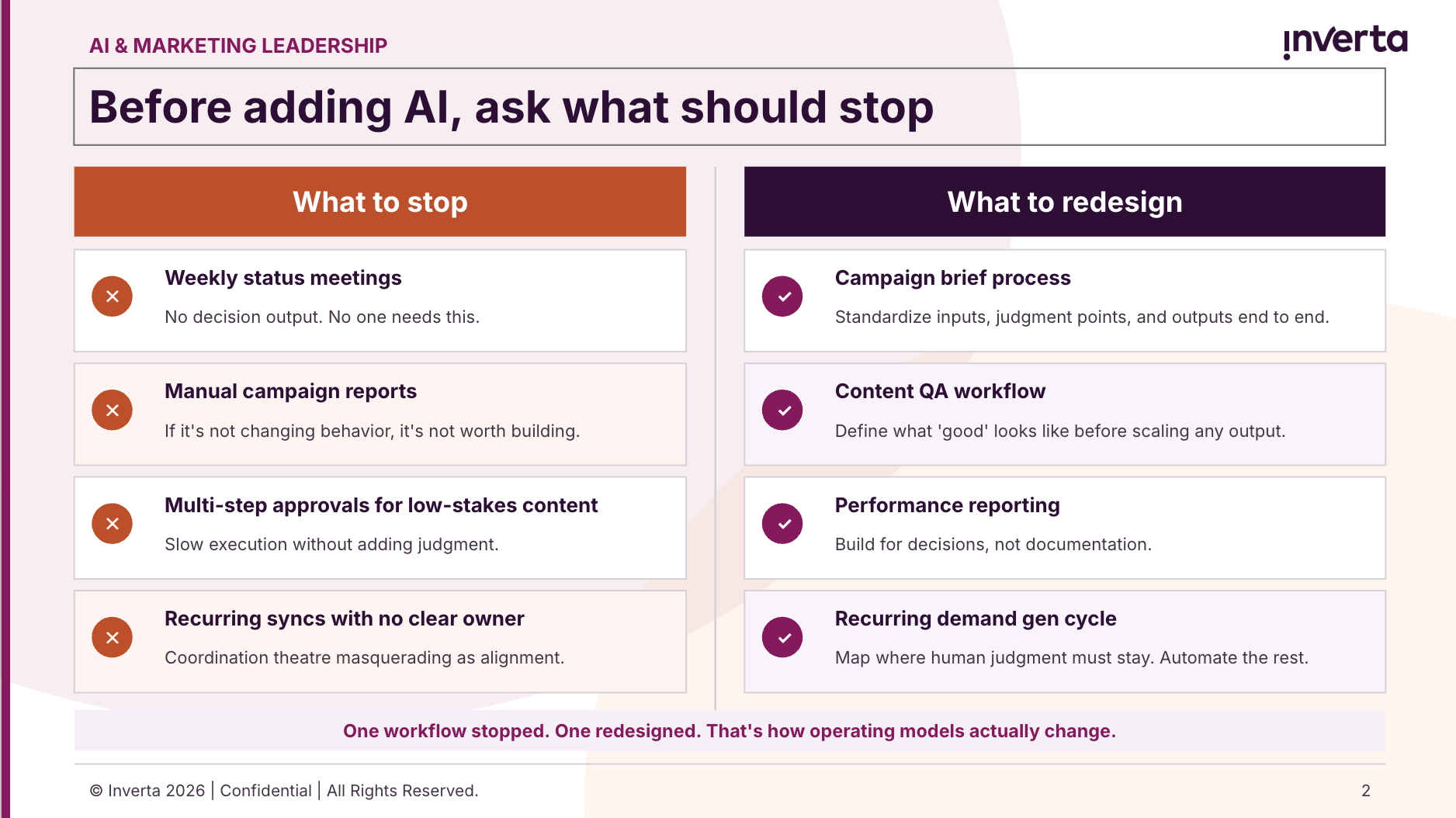

That means stopping before starting. Not everything deserves to continue. Recurring meetings with no decision output. Manual reports nobody reads. Approval chains that slow execution without adding judgment. These habits absorb attention without compounding value. And as long as they run at full speed, there is no room for the redesign work that AI actually requires.

Before you ask where agents belong, ask: What work can stop? What workflow can be standardized? What judgment must stay human? Is it currently protected, or getting lost in volume?

These aren't rhetorical questions. They're the starting point for a marketing operating model that AI can actually improve.

The CMOs who will win

They won't be the ones who generate the most AI activity from their teams.

They'll be the ones who created room to redesign one workflow, relieve one real burden, and make AI actually sustainable for the humans doing the work.

The teams that look back in two years and see compounding advantage won't be the ones that moved the fastest. They'll be the ones that moved deliberately. They picked one workflow and actually finished redesigning it. They handed off one recurring task to an agent and made sure the team could see the relief. They defined what 'good' looks like for one important input before trying to scale anything.

Small moves, done completely, in sequence. That's how operating models actually change.

The shift that matters isn't from skepticism to enthusiasm. It's from tool adoption to operating model redesign, and it starts with giving your team somewhere real to begin, not more pressure to move faster.

If you're honest about where your team actually is with AI right now, not where you want to be, what's the real bottleneck? Capacity? Clarity about what to redesign first? Something else? I'm genuinely curious.

Want the full framework?

The Inverta AI Manifesto (Stop Adopting AI. Start Redesigning Marketing.) goes deeper on every principle in this article. It walks through how to redesign workflows, organize around outcomes, and build the capabilities that create compounding advantage over time.

If this article named something you've been living, the Manifesto is the next step. Download it today.
